When we work with a computer, we give it instructions. Internally, computers are based on the binary number system, which uses only two symbols: one and zero.

The computer can act on our instructions because they are ultimately converted into a series of 0s and 1s.

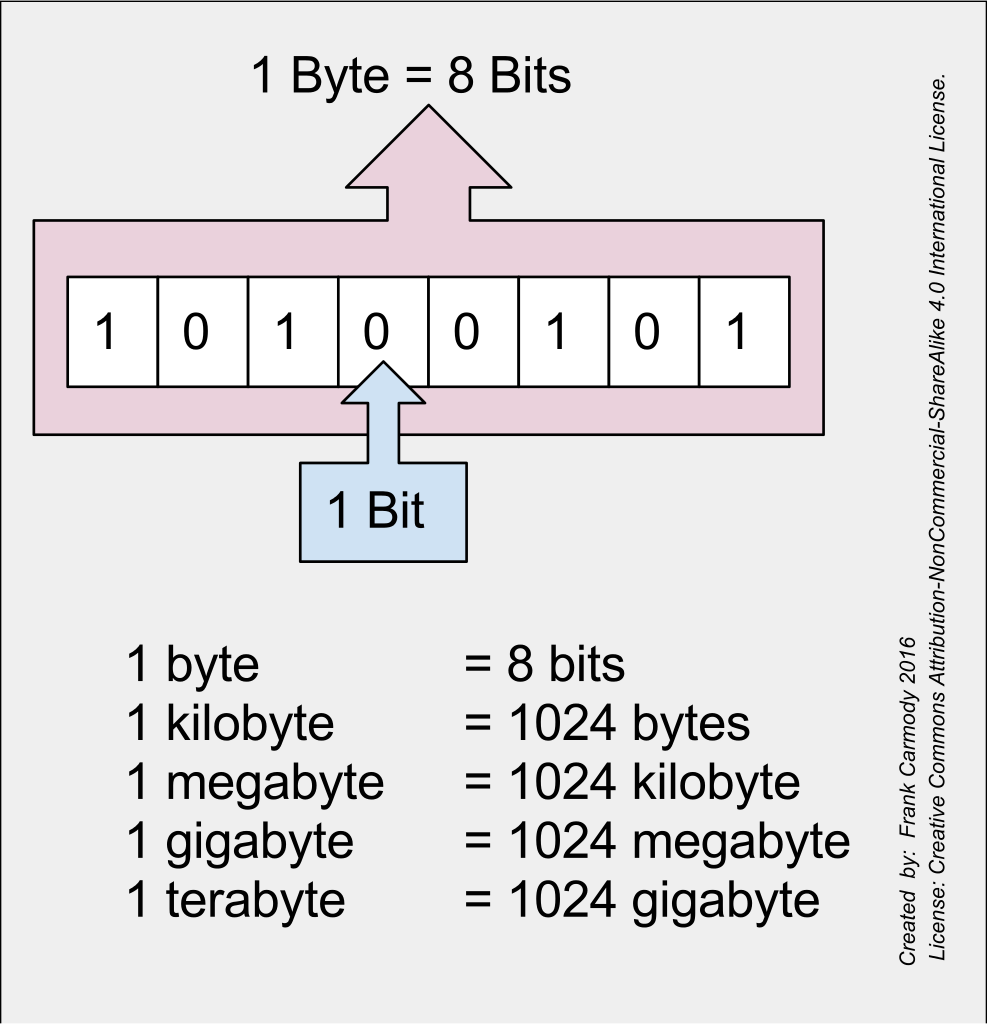

Bit (Binary Digit)

In computing, the smallest unit of information is the binary digit, which has a value of either one or zero. The word bit is a contraction of the words binary digit.

Bits are the fundamental building blocks of digital information, and their accurate transmission is essential to the reliable operation of computers. In hardware, a bit is represented by an electrical signal in the wires: one state stands for one and the other for zero. Because only two clearly separated states have to be told apart, the likelihood of the two signals being confused is very low, and the signals tolerate interference well.

One bit can take two different values, and two bits together can take four different combinations of values. A simple way to picture this: imagine that you have blue and white shirts and blue and white shorts. There are four possible combinations: white shirt with white shorts, blue shirt with blue shorts, blue shirt with white shorts, or white shirt with blue shorts.

To represent more complex information, longer groups of bits are necessary. Each additional bit doubles the number of possible combinations.

- Three bits can represent eight different values.

- Four bits can represent sixteen different values.

- Eight bits can represent 256 different values.
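The counts in this list follow from the fact that n bits allow 2 to the power of n combinations. As a minimal sketch, the following Python snippet lists every combination for a given number of bits and counts them. The function name `bit_patterns` is made up for this illustration.

```python
from itertools import product


def bit_patterns(n):
    """Return every distinct pattern of n bits as a string of 0s and 1s."""
    return ["".join(bits) for bits in product("01", repeat=n)]


for n in (1, 2, 3, 4, 8):
    patterns = bit_patterns(n)
    # The number of patterns always equals 2 ** n.
    print(n, "bits ->", len(patterns), "patterns")

# The four two-bit patterns, comparable to the shirt and shorts example.
print(bit_patterns(2))
```

For two bits, the four patterns play the same role as the four shirt and shorts combinations described earlier.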

A sequence of eight bits is called a byte. For example, the answers to eight yes-or-no questions carry a total of 8 bits, or 1 byte, of information. Written as digits, a sequence such as 11101010 corresponds to one byte. In common encodings such as ASCII, one byte can store one letter.
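To make the byte example concrete, here is a small Python sketch that reads the sequence 11101010 as a number and shows the eight-bit pattern of a letter. It assumes the ASCII encoding for letters, and the helper names `byte_to_int` and `char_to_byte` are invented for this example.

```python
def byte_to_int(bits):
    """Interpret a string of eight 0s and 1s as an unsigned integer."""
    if len(bits) != 8 or set(bits) - {"0", "1"}:
        raise ValueError("expected exactly eight binary digits")
    return int(bits, 2)


def char_to_byte(ch):
    """Return the 8-bit pattern of a single ASCII character (assumed encoding)."""
    code = ord(ch)
    if code > 127:
        raise ValueError("not an ASCII character")
    return format(code, "08b")


print(byte_to_int("11101010"))  # 234
print(char_to_byte("A"))        # 01000001
```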

Byte

The computer works with binary digital signals. As described above, the smallest unit of data is the bit, which can have a value of either one or zero, and a sequence of eight bits is called a byte. Computers can store millions of bytes or more, so we use larger units to describe the amount of data that can be stored.

Kilobyte

The first of the larger units built on the byte is the kilobyte. The prefix kilo comes from Greek and means a thousand. However, unlike in everyday life, in computing this multiplication factor is traditionally not 1000 but 1024, because computers are based not on the decimal system but on the binary system, and 1024 is a power of two (two multiplied by itself ten times). This difference arises from the way that electronic circuits represent information. Therefore, 1024 bytes equal one kilobyte, and the abbreviation for kilobyte is KB.
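The factor 1024 is exactly 2 to the power of 10, which is why it fits the binary system so neatly. A brief Python sketch, using the 1024-based convention of this article (the constant and function names are illustrative only):

```python
# 1024 bytes per kilobyte, following the binary convention used in this article.
BYTES_PER_KILOBYTE = 2 ** 10


def kilobytes_to_bytes(kilobytes):
    """Convert a number of kilobytes to bytes using the factor 1024."""
    return kilobytes * BYTES_PER_KILOBYTE


print(BYTES_PER_KILOBYTE)       # 1024
print(bin(BYTES_PER_KILOBYTE))  # 0b10000000000 (a one followed by ten zeros)
print(kilobytes_to_bytes(4))    # 4096
```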

Megabyte

A megabyte is composed of 1,024 kilobytes. Its name is derived from the Greek word “mega,” meaning great; as a prefix it denotes a million, or a thousand times a thousand. The abbreviation for megabyte is MB.

This means that 1 MB = 1024 KB = 1024 x 1024 bytes = 1 048 576 bytes. (Because this unit is based on the binary number system, its official name in today's computing is mebibyte, abbreviated MiB, although megabyte and MB remain in common use.)

Gigabyte

A gigabyte is a unit of measurement for digital information that equals 1024 megabytes. The prefix giga comes from Greek and denotes a billion. In computer science, as with the computing meanings of kilo- and mega-, giga means 1024 times 1024 times 1024 bytes. The abbreviation for gigabyte is GB. One gigabyte equals 1024 megabytes, 1 048 576 kilobytes, or 1 073 741 824 bytes.

Terabyte

The next unit after the gigabyte is the terabyte. The prefix tera also comes from Greek and denotes a trillion. As with the computing meanings of kilo-, mega-, and giga-, tera means 1024 times 1024 times 1024 times 1024 bytes. The abbreviation for terabyte is TB. So, 1 TB = 1024 GB = 1 048 576 MB = 1 073 741 824 KB = 1 099 511 627 776 bytes. (Because this unit is based on the binary number system, its official name in today's computing is tebibyte, abbreviated TiB, although terabyte and TB remain in common use.)
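The chain of units can be checked with a few lines of Python. This is only a sketch that assumes the 1024-based convention used throughout this article; the names `UNITS`, `units_in_bytes`, and `format_size` are invented for the example.

```python
UNITS = ["bytes", "KB", "MB", "GB", "TB"]


def units_in_bytes():
    """Map each unit to its size in bytes, using powers of 1024."""
    return {unit: 1024 ** power for power, unit in enumerate(UNITS)}


def format_size(num_bytes):
    """Express a byte count in the largest unit that keeps the value below 1024."""
    size = float(num_bytes)
    unit = UNITS[0]
    for unit in UNITS:
        # Stop once the value fits the current unit or no larger unit exists.
        if size < 1024 or unit == UNITS[-1]:
            break
        size /= 1024
    return f"{size:.2f} {unit}"


for name, value in units_in_bytes().items():
    print(name, "=", value, "bytes")

print(format_size(1_048_576))          # 1.00 MB
print(format_size(1_099_511_627_776))  # 1.00 TB
```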

Our home computers still store around 250 GB of data, but this amount is rapidly growing, and some computers come with hard drives that hold multiple terabytes.